The Abstraction of Value and the Value of Abstraction

A 25-Year Thesis on the Migration Patterns of Technology, Capital, and Talent

There is a duality at the heart of technological progress that explains how economic value migrates as technology advances. This is the interplay between the abstraction of value and the value of abstraction. The first pattern describes how technology ecosystems progressively separate into commoditized lower layers and high-value upper layers, with value migrating relentlessly upward as the building blocks get standardized. The second pattern is its reciprocal: as machines take over more of the execution, the humans who operate at the highest levels of abstraction (framing problems, exercising judgment, making decisions under ambiguity) capture a disproportionate share of the rewards. Value gets abstracted upward. And abstraction itself becomes more valuable.

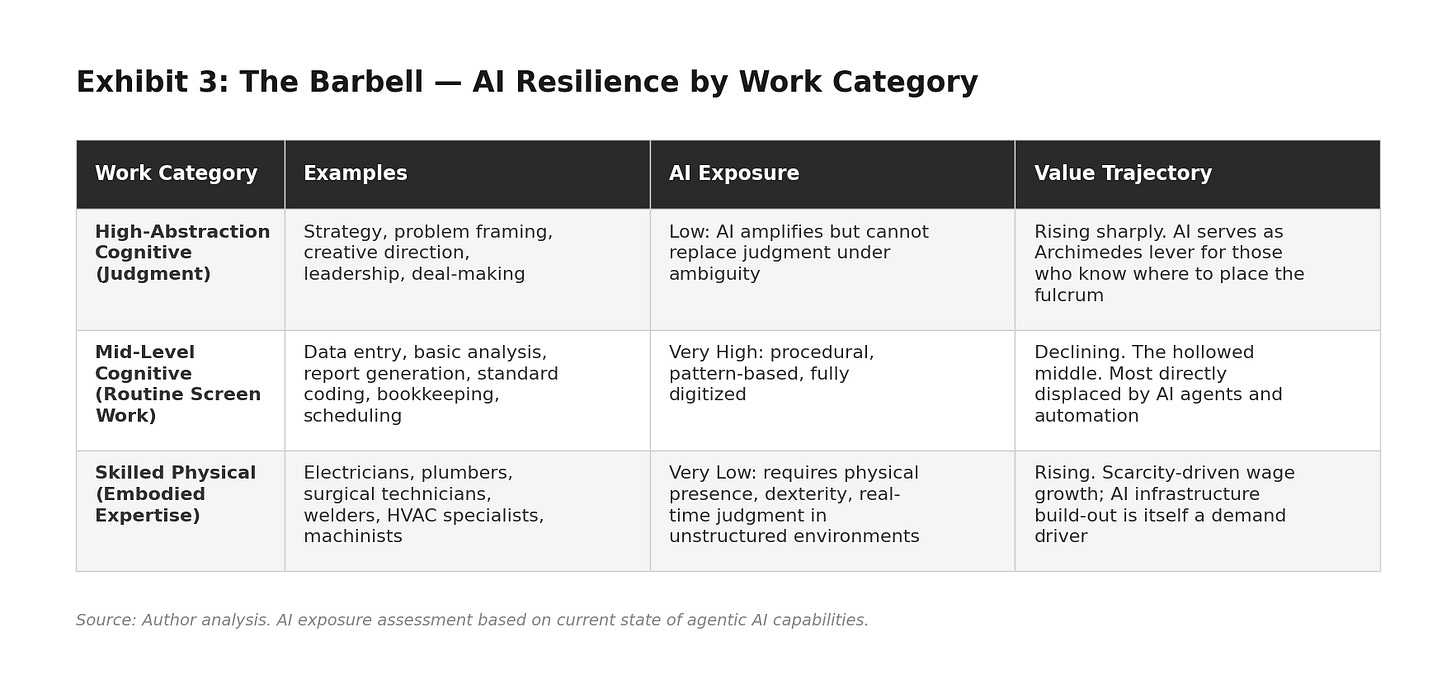

This duality is not merely an observation about technology stacks. It is a structural force reshaping labor markets, competitive dynamics, and the very definition of valuable work. In 2026, AI agents can write code, draft contracts, generate analyses, and orchestrate multi-step business workflows. The abstraction frontier has advanced to the point where natural language is the new programming interface and human intent, not human execution, is the scarce input. The consequence is a barbell economy: value is concentrating at two ends. At one end, strategic judgment, creative direction, and deep domain expertise command growing premiums because AI amplifies their leverage. At the other end, skilled physical work (electricians wiring data centers, plumbers building infrastructure, surgical technicians in operating rooms) is surging in demand precisely because it resists digital substitution. The middle, where routine cognitive work done in front of a screen lives, is being hollowed out.

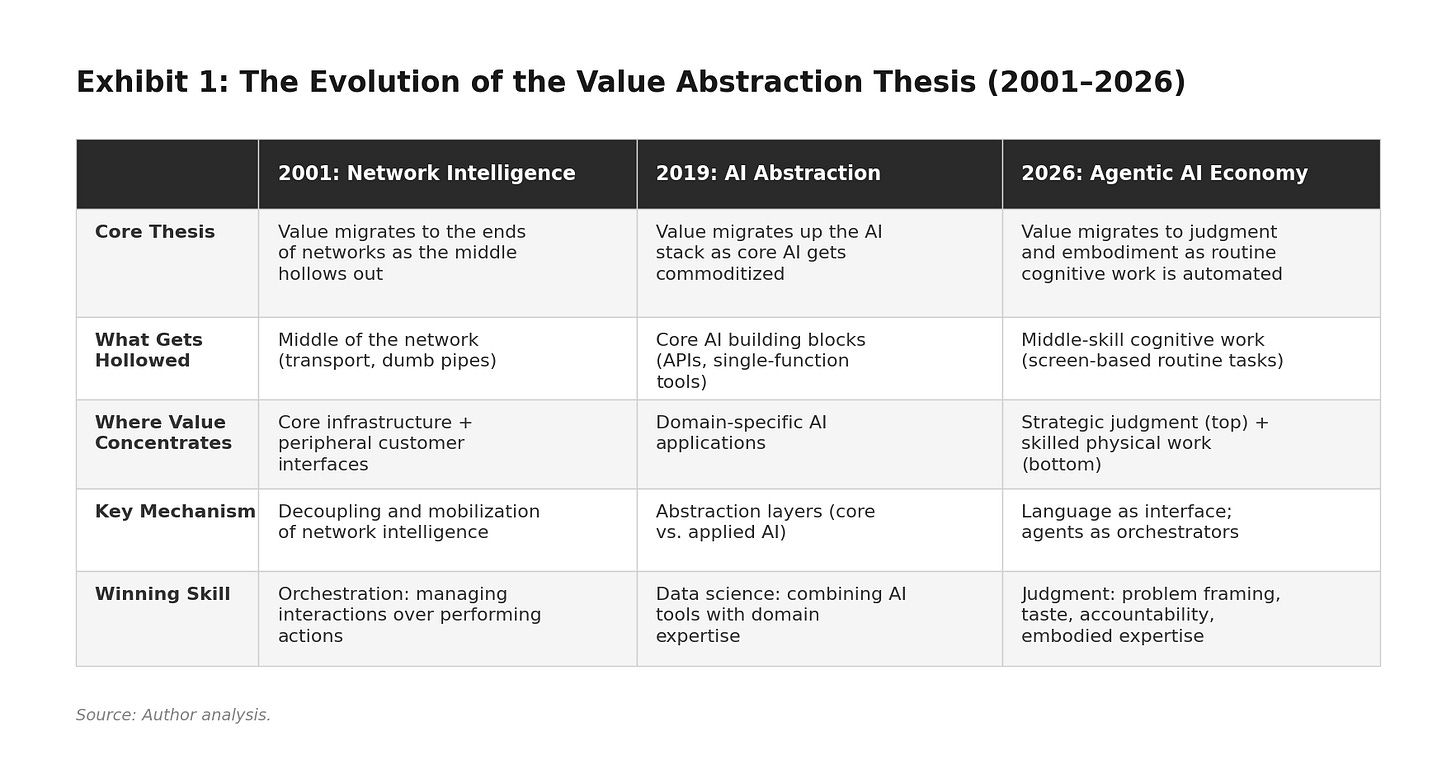

What gives me confidence in this framework is not just its explanatory power today. It is the fact that I have been developing it, in different forms, for a quarter century. The core logic, that the middle gets hollowed out and value migrates to the ends, first appeared in a Harvard Business Review article I co-authored in January 2001. The abstraction duality took shape in a piece I wrote around 2019. And the barbell extension, connecting the thesis to labor markets and skilled trades, is what this essay contributes. Three iterations, three different substrates, one persistent structural pattern. That kind of durability across wildly different technological eras is, I believe, the strongest evidence that the pattern is real.

A 25-Year Intellectual Thread

Let me trace the thread. Each iteration applied the same structural logic to a different substrate, and each time the pattern held.

In 2001, the substrate was networks. The argument was that as digital networks became faster and more ubiquitous, intelligence would decouple from the middle of the network and concentrate at the ends: shared, scalable infrastructure at the core and highly customized interfaces at the periphery. Telecom carriers (the “dumb pipes” in the middle) would lose value to infrastructure providers like Cisco and customer-interface companies like Yahoo!. Inside organizations, the same pattern would hollow out middle management: leadership intelligence would centralize at the top while decision-making intelligence would push to frontline employees. The critical insight was that in a networked world, more money can be made in managing interactions than in performing actions.

In 2019, the substrate shifted to AI ecosystems. I described how AI development was partitioning into core AI (platforms and tools built by technology giants) and applied AI (business applications). As core AI got standardized and democratized, value migrated upward to the applied layer. A few platform providers would capture value from building blocks, but the vast majority of value would be created by businesses that focused on the “so what” and the “now what” of AI. The reciprocal held as well: as execution got automated, humans who worked at higher levels of abstraction (cognitive, strategic, creative) captured more value than those who worked at lower levels (physical, procedural).

In 2026, the substrate is the economy itself. The decoupling and mobilization patterns I identified in networks are now playing out in labor markets, skill distributions, and the competitive structure of entire industries. The hollowing of the middle is no longer a metaphor about telecom pipes or network topology. It is a lived reality for millions of knowledge workers whose routine cognitive tasks are being absorbed by AI agents. And the value-at-the-ends pattern has taken a form I did not fully anticipate: a barbell where strategic judgment on one end and skilled physical work on the other emerge as the most AI-resilient categories of human contribution.

The Dual Meaning of Abstraction

Abstraction, in its simplest form, is the process of hiding complexity behind a simpler interface. When you use a calculator, you do not think about binary arithmetic. When you call an API, you do not care how the underlying service works. Each layer of abstraction lets the layer above it operate more efficiently by ignoring the details below.
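
As a minimal illustration of that layering, the Python sketch below builds addition from bit-level logic, wraps it in a plain function, and then uses that function at a level that expresses only business intent. The names `add_bits`, `add`, and `invoice_total` are hypothetical, chosen only for this example.

```python
def add_bits(a: int, b: int) -> int:
    """Lowest layer: add two non-negative integers using only bitwise logic."""
    while b:
        carry = (a & b) << 1  # bits that overflow into the next position
        a = a ^ b             # per-position sum, ignoring carries
        b = carry
    return a


def add(a: int, b: int) -> int:
    """Middle layer: a simple interface that hides the bit manipulation."""
    return add_bits(a, b)


def invoice_total(line_items: list[int]) -> int:
    """Top layer: expresses business intent and never mentions bits."""
    total = 0
    for amount in line_items:
        total = add(total, amount)
    return total


print(invoice_total([120, 80, 45]))  # prints 245
```

The author of `invoice_total` can ignore everything beneath `add`, which is the sense in which each layer lets the one above it operate more efficiently.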

The abstraction of value describes how technology ecosystems progressively separate into lower layers (infrastructure, platforms, building blocks) and higher layers (applications, workflows, business solutions). As the lower layers get standardized and commoditized, value migrates upward to the layers that solve real business problems. This is the “standing on the shoulders of giants” effect. Every generation of technology creates a new floor upon which the next generation builds.

The value of abstraction is the reciprocal: as more of the execution gets automated, the humans who work at the highest levels of abstraction (framing problems, exercising judgment, making decisions under ambiguity) capture a disproportionate share of the economic value. Throughout history, as societies have advanced, value has shifted from physical and concrete forms of labor to cognitive and abstract forms.

Both halves of this duality are more powerful today than when I first described them. But both also need updating, because the AI revolution has introduced dynamics that the original frameworks did not anticipate.

The Abstraction of Value: From Two Layers to Four

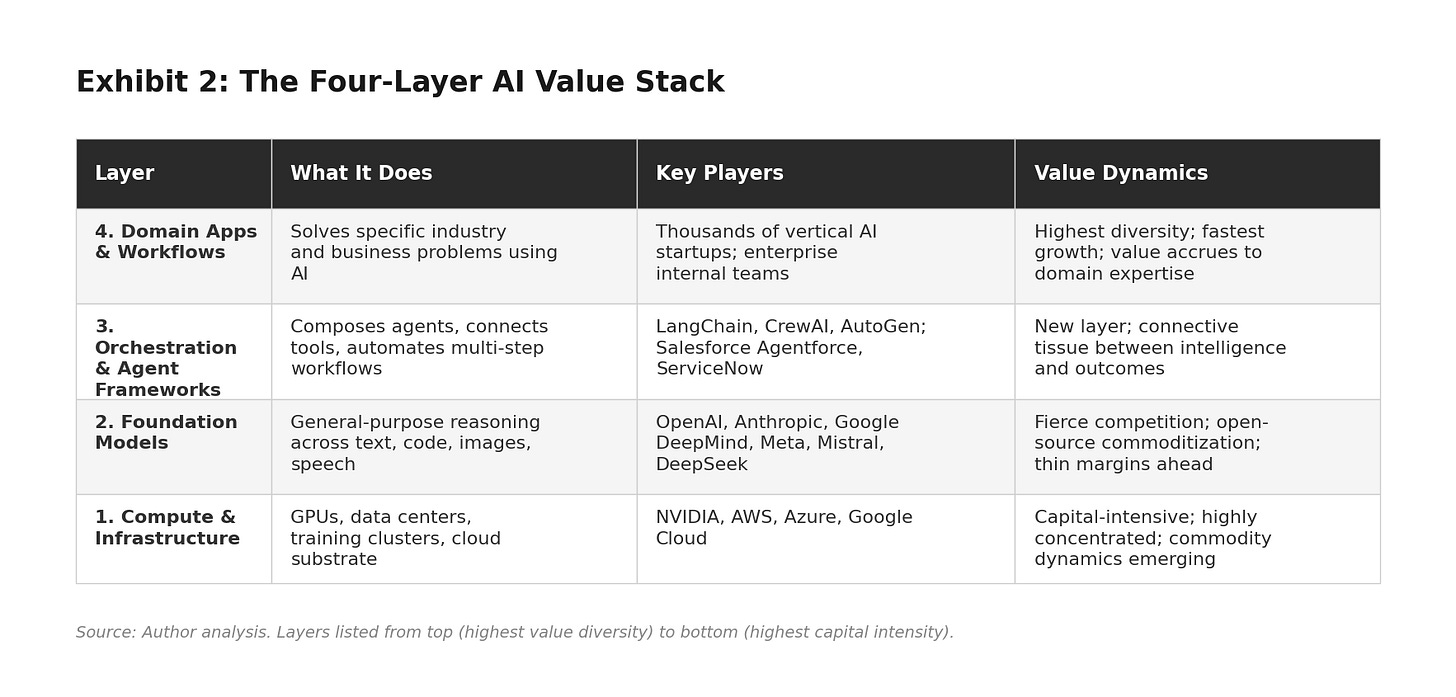

When I originally wrote about abstraction in AI, the ecosystem could be described in two layers: core AI (platforms and tools) and applied AI (business applications). That was a reasonable map of the world circa 2019. It is inadequate for 2026. Today, the AI value stack has at least four distinct layers, each with its own competitive dynamics and value capture logic.

Layer 1: Compute and Infrastructure. This is the physical foundation: GPUs, data centers, training clusters, and cloud infrastructure. NVIDIA dominates the chip layer. The hyperscalers (Amazon, Microsoft, Google) provide the compute substrate. Jensen Huang has called the current AI infrastructure build-out “the largest in human history.” This layer is capital-intensive, concentrated, and increasingly strategic, but it faces commodity dynamics as competition intensifies.

Layer 2: Foundation Models. This layer did not exist in its current form when I wrote the original abstraction piece. Foundation models from OpenAI, Anthropic, Google DeepMind, Meta, and Mistral are general-purpose reasoning engines that handle text, images, code, speech, and structured data through a single interface. They are not task-specific APIs. They are general-purpose minds that can be directed toward any task. Open-source models (LLaMA, Mistral, DeepSeek) create powerful commoditization pressure against closed frontier models.

Layer 3: Orchestration and Agent Frameworks. This is the genuinely new layer. Agent frameworks like LangChain, CrewAI, and AutoGen, along with enterprise platforms like Salesforce Agentforce and ServiceNow, allow organizations to compose AI agents that use tools, access databases, invoke APIs, and execute multi-step workflows. This is the connective tissue between raw model intelligence and real business outcomes. In my 2001 HBR article, I identified orchestration as the highest-value role in a networked world: “more money can be made in managing interactions than in performing actions.” Twenty-five years later, agent orchestration is proving exactly that.

Layer 4: Domain-Specific Applications and Workflows. This is where the original thesis lands. Insurance fraud detection. Clinical trial matching. Contract automation. Marketing campaign optimization. The difference from 2019 is that these applications can now be built dramatically faster (because of Layers 2 and 3) and by a much wider range of builders (because of the abstraction leap described below). Gartner has projected that by 2026, 75% of new enterprise applications will be built using low-code or no-code technologies, and that the combined market for these platforms will exceed $44 billion.

The pattern across these four layers confirms the original thesis but with sharper teeth. Value is migrating relentlessly upward. The companies building applications on top of foundation models and agent frameworks capture enormous value, often with surprisingly small teams. The foundation model layer, despite all the attention it receives, may end up with thin margins because of open-source competition and aggressive pricing wars. Sir Isaac Newton’s observation about standing on the shoulders of giants still applies, but with a twist. We are no longer standing on the shoulders of a single giant. We are standing on a four-story building, and each floor is getting taller.

The NVIDIA Paradox: Build-Out Economics vs. Steady-State Economics

A sharp reader will raise an obvious objection at this point: if lower layers get commoditized, why is NVIDIA, the quintessential Layer 1 company, the most valuable in the world? The hyperscalers are printing money from compute. Infrastructure players seem to be capturing the lion’s share of AI value. Does this not contradict the framework?

It does not, but the distinction requires care. The framework describes equilibrium dynamics, not transition dynamics. During every major infrastructure build-out, the picks-and-shovels players capture extraordinary value. This is a pattern with 150 years of precedent. The railroad companies minted fortunes in the 1870s and 1880s; in the steady state, many went bankrupt while the companies that used the rails (Standard Oil, Sears Roebuck, the meatpackers) captured the durable value. The telecom equipment makers dominated the late 1990s; Cisco hit the #1 global market cap in March 2000. Within 18 months, it had lost 80% of its value and has never recovered in real terms. The durable value migrated to Google, Amazon, Apple, and Facebook: companies that built applications and customer relationships on top of the infrastructure. The cloud build-out rewarded AWS and Azure handsomely, but their highest-margin services today are not raw compute (which faces relentless price competition) but managed AI services, developer platforms, and orchestration tools at higher layers.

We are in the construction phase of AI infrastructure, and construction phases always reward the suppliers of scarce inputs. The question is not whether NVIDIA is capturing value today. It obviously is. The question is whether that capture is structural or cyclical. The competitive forces that will compress Layer 1 margins are already visible: Google’s TPUs, Amazon’s Trainium chips, AMD’s MI300 series, and a wave of custom silicon from Microsoft, Meta, and startups.

But there is a subtler point that actually reinforces the framework: NVIDIA’s real moat is not at Layer 1. It is at Layer 3. NVIDIA’s dominance comes less from manufacturing GPUs than from CUDA, the software ecosystem that locks developers into NVIDIA’s hardware. CUDA is an orchestration layer: a programming framework, a library ecosystem, and a developer community that makes building AI workloads on NVIDIA hardware dramatically easier than on any alternative. The company that looks like an infrastructure play is actually a platform play disguised as a chip company. Jensen Huang understands this; it is why NVIDIA invests as heavily in software as in silicon. In this reading, NVIDIA is not a counterexample to the value abstraction thesis. It is a confirmation: the most successful infrastructure company in history has succeeded precisely by migrating upward through the stack.

The Anthropic and OpenAI Data Point

Perhaps the most telling evidence of value migration comes from the two leading frontier model companies themselves. If the foundation model layer (Layer 2) were the durable value capture point, the rational strategy would be simple: sell API tokens, improve the model, defend the capability lead. Instead, both Anthropic and OpenAI are racing upward through the stack as fast as they can.

Anthropic’s trajectory is especially instructive. The company built one of the world’s most capable foundation models in Claude. But its fastest-growing product is not model access. It is Claude Code: an agentic coding tool that orchestrates model intelligence into real development workflows, reading codebases, writing code, running tests, submitting pull requests. Claude Code crossed $1 billion in annualized revenue within six months of general availability. Anthropic also developed the Model Context Protocol (MCP), an open standard for connecting AI agents to external tools and data sources. MCP is an explicit play to own the protocol layer of AI orchestration, analogous to what HTTP did for the web. Giving away the protocol for free only makes sense if the value capture happens at the layers above. Add the Agent SDK and multi-agent frameworks, and the picture is clear: Anthropic is climbing from Layer 2 toward Layers 3 and 4 at speed.

OpenAI is making the same migration with different emphasis. ChatGPT is a consumer application (Layer 4). The enterprise partnerships with Bain and PwC are application-layer plays. The $200-per-month Pro tier is priced on workflow value, not token cost. Both companies are telling you by their actions that they do not believe the model layer is where durable value lives. When DeepSeek produced frontier-competitive models at a fraction of the cost in early 2025, it demonstrated what the framework predicts: open-source commoditization pressure is compressing Layer 2 margins, and the smart money is migrating upward.

The New Abstraction Frontier: Language as Interface

The most consequential shift since my original articles is not just that there are more layers. It is that the interface between humans and machines has been fundamentally transformed.

Consider the progression. In the 1950s, programmers wrote machine code: raw binary instructions. By the 1970s, high-level languages like C expressed logic in something closer to human language. By the 2000s, APIs let developers invoke complex services with a single function call. By the 2010s, low-code and no-code platforms let non-programmers build applications through visual interfaces. Each step was a leap in abstraction, allowing humans to express intent at a higher level while the machine handled implementation.

The AI era has taken another leap, perhaps the most significant yet: natural language is now the programming interface. When a developer uses Claude Code or Cursor, they describe what they want in plain English. The AI agent reads the codebase, writes the code, runs tests, debugs failures, and submits a pull request. The developer’s job is not to implement. It is to direct, review, and exercise judgment. Claude Code went from research preview in early 2025 to general availability by May of that year, crossing $1 billion in annualized revenue within six months. A Google principal engineer noted at a developer meetup in January 2026 that Claude replicated a year of architectural work in a single hour.

This is my 2001 thesis in its most extreme form. The decoupling of intelligence has advanced to the point where the primary human contribution is no longer writing the code. It is knowing what to build and why. The mobilization of intelligence has advanced to the point where natural language serves as the universal protocol I envisioned, replacing the XML and WAP standards I discussed in the HBR article. The abstraction of value has climbed from the physical layer (machine code) past the procedural layer (APIs) to the intent layer (natural language). And the value of abstraction has climbed in lockstep.

The Value of Abstraction: From Execution to Judgment

My original 2019 article concluded that value was shifting from people who work with their hands to people who work with their minds. That was broadly true, and it remains true as a general trend. But the AI revolution has added a crucial nuance: within cognitive work itself, value is shifting from execution to judgment.

This is the argument I have been developing in my recent writing on AI and the future of work. In my AI-Proof series, I introduced the concept of skill security: the idea that your resilience in an AI-transformed economy depends not on the tasks you perform but on the judgment you exercise. AI can generate code, draft contracts, write marketing copy, and produce financial analyses. What it cannot do is decide which problem is worth solving, navigate the politics of getting a solution adopted, take accountability for an outcome, or make the taste-based calls that separate good work from great work.

In a related essay, Mind the Gap, I argued that AI is compressing the middle of the skill distribution. It dramatically elevates the capabilities of novices (giving a junior analyst the research output of a senior one) while offering comparatively less uplift to deep experts (who already know the right answers). The result is a barbell: deep expertise at the top and AI-augmented generalists at the bottom, with the middle getting squeezed.

I think of it through the metaphor I have been using in my executive education work. AI is an Archimedes lever: it amplifies the force you apply. But a lever is only as good as the person choosing where to place the fulcrum. The value of abstraction is no longer about being able to operate the lever. It is about knowing where to put it.

The Surprise: The Return of Skilled Manual Work

Here is where the framework yields an insight I did not anticipate when I wrote either of the earlier pieces. In 2019, I wrote that “people who work with their hands make a lot less money than those who work with their minds and keyboards.” In 2001, I noted that the hollowing of the middle applied to middle management, whose information-transport function was being replaced by networks. Both statements were true in their time. But if AI is now coming for “any work done in front of a screen,” then the most AI-resilient work is, by definition, work that cannot be done in front of a screen.

The data is striking. According to Randstad’s analysis of over 50 million job postings, demand for robotics technicians has jumped 107% since late 2022. HVAC engineer demand increased 67%. Construction roles grew 30%. The U.S. construction industry needs 530,000 additional workers in 2026 alone. NVIDIA CEO Jensen Huang has called the AI infrastructure build-out a massive job creator for plumbers, electricians, and steel workers, and noted at the World Economic Forum that wages for these roles are climbing into six figures. Mike Rowe, who has long championed the trades, recently reported meeting three electricians under 30 earning between $240,000 and $280,000 per year. The U.S. Department of Labor announced $145 million in apprenticeship grants in 2026 targeting shipbuilding, defense, semiconductors, and energy.

There is a deep irony here. AI disrupts cognitive-procedural work (the kind done on screens) far more easily than it disrupts skilled manual work. You can automate a data analysis pipeline, but you cannot automate a plumber diagnosing a leak behind a wall, an electrician wiring a data center, or a surgical technician assisting in an operating room. These jobs require physical presence, manual dexterity, real-time judgment in unstructured environments, and embodied expertise that current AI systems fundamentally lack. Every new AI data center, every electric vehicle charging station, every solar panel installation requires human tradespeople to build, install, and maintain the physical infrastructure. The AI revolution is, paradoxically, one of the greatest demand drivers for skilled manual labor in a generation.

The value of abstraction, it turns out, is not a one-dimensional ladder from physical to cognitive. It is more like a barbell. At one end, value accrues to the highest levels of cognitive abstraction: strategic judgment, problem framing, creative direction. At the other end, value is returning to skilled physical work that resists digital substitution. The middle, where routine screen-based cognitive work lives, is where the disruption bites deepest.

Implications for Business Leaders

The updated framework yields four strategic imperatives.

First, invest in the orchestration layer. The companies that will capture the most value in the AI era are not those that build foundation models (too capital-intensive, too concentrated) or those that sell raw compute (commodity dynamics). They are the ones that master the orchestration of AI agents, tools, and workflows to solve business problems. In 2001, I wrote that value in a networked world accrues to orchestrators, not performers. That principle has only intensified. The orchestration layer of the AI stack (Layer 3) is where strategic advantage is built.

Second, retrain for judgment, not execution. Every training dollar spent teaching employees to perform tasks that AI can automate is a dollar with diminishing returns. The highest-return investment is in developing the judgment, domain expertise, and problem-framing skills that make humans irreplaceable orchestrators of AI systems. For two decades, the mantra was “learn to code.” That advice is not wrong, but it is incomplete and increasingly misleading if taken literally. The more important skill is learning to think at the right level of abstraction.

Third, treat AI fluency as a leadership competency. The most effective leaders in an AI-first world will not be those who delegate AI to their technology teams. They will be the ones who understand the abstraction stack well enough to make strategic bets about where to invest, what to build versus buy, and how to organize their firms around AI-augmented workflows.

Fourth, rethink your assumptions about the labor hierarchy. If your workforce strategy assumes that cognitive desk work is always more valuable than skilled manual work, you are operating on an outdated mental model. The AI economy rewards both ends of the barbell. The surgeon and the plumber, the CEO and the electrician, the AI strategist and the welder are all doing work that AI, for all its power, cannot reach. Smart organizations will invest in both ends.

What the Abstraction Thesis Tells Investors

The value abstraction thesis is not investment advice. But it does offer a structural lens for three questions that matter enormously for capital allocation: at which layer of the stack is value most durable, how long does each investment window last, and what signals indicate that value is migrating?

Layer 1: Compute and Infrastructure. The investment window runs from now through roughly 2027-2028. This is the picks-and-shovels phase, and the returns have been extraordinary. But history suggests infrastructure advantage windows last 5-7 years from the initial demand surge. We are about three years in from the ChatGPT moment. Custom silicon is already eroding pricing power on inference workloads. Training demand will sustain NVIDIA longer, but inference is the larger market in the long run, and it is heading toward commodity economics. The signal to watch: when inference costs decline faster than inference demand grows, the margin compression has begun.
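
The Layer 1 signal can be made concrete with simple arithmetic. The sketch below assumes, purely for illustration, that inference revenue equals unit price times volume and treats revenue as a rough proxy for margin pressure. The function names and the sample growth rates are hypothetical, not figures from any company.

```python
def revenue_change(demand_growth: float, price_change: float) -> float:
    """Year-over-year change in revenue, assuming revenue = price * volume.

    demand_growth: fractional growth in units served (0.50 means +50%).
    price_change: fractional change in price per unit (-0.40 means -40%).
    """
    return (1 + demand_growth) * (1 + price_change) - 1


def compression_signal(demand_growth: float, price_change: float) -> bool:
    """True when falling prices outweigh rising volume, so revenue shrinks."""
    return revenue_change(demand_growth, price_change) < 0


# Hypothetical scenarios, not real market data.
scenarios = {
    "build-out": (1.50, -0.30),     # volume +150%, price -30%
    "steady state": (0.50, -0.40),  # volume +50%, price -40%
}
for name, (growth, price) in scenarios.items():
    change = revenue_change(growth, price)
    signal = compression_signal(growth, price)
    print(f"{name}: revenue change {change:+.0%}, signal={signal}")
```

Under these assumed numbers, the first scenario grows revenue by roughly 75 percent while the second shrinks it by roughly 10 percent. Because the two effects multiply, a 40 percent price decline is enough to outweigh 50 percent volume growth, so the signal can fire before the percentage decline in price visibly exceeds the percentage growth in demand.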

Layer 2: Foundation Models. The pure-play model exposure window is already narrowing. DeepSeek, LLaMA, Mistral, and Qwen are compressing the capability gap from years to months. API pricing has fallen by over 90% in two years. The model layer will sustain value for companies that successfully migrate upward (as Anthropic and OpenAI are doing), but for pure model providers, margins will compress toward the economics of cloud databases: meaningful but not spectacular. The signal to watch: the share of revenue that comes from raw API tokens versus the share that comes from tools, applications, and platform services. A rising share for the latter group confirms upward migration.

Layer 3: Orchestration and Agent Frameworks. The investment window is opening now and likely remains attractive through 2028-2032. This is the least crowded and most strategically important layer. Orchestration layers tend to become sticky standards, because once enterprises wire their workflows through a platform, switching costs are enormous. Think of what Salesforce did for CRM or AWS for cloud. The winners at Layer 3 could sustain value capture for a decade or more. The signal to watch: developer adoption metrics, enterprise deployment breadth, and protocol standardization. Which orchestration platforms are becoming the default wiring for AI workflows?

Layer 4: Domain Applications and AI-Native Enterprises. This layer has the longest runway, but it also requires the most patient capital. This is where the JP Morgans, Walmarts, UnitedHealths, and a generation of new AI-native companies enter the picture. Large incumbents with proprietary data, deep customer relationships, and organizational capability to deploy AI at scale will capture enormous value, but it will take time for this to show up in earnings. The signal to watch: “boring AI” earnings beats, when traditional enterprises report margin expansion or revenue growth explicitly attributed to AI-driven operational improvements.

The investment punchline is provocative but historically grounded: the market is currently priced for the build-out phase to be the permanent state. History says it never is. The biggest AI value creators of 2033 may be “boring” incumbents that nobody currently thinks of as AI companies, just as the biggest internet winners of 2010 (Amazon, Apple) were not the companies getting the most internet hype in 1999. The investor who positions for the steady state, gradually shifting from infrastructure exposure toward orchestration platforms and domain-rich enterprises, is making the same structural bet that paid off in every prior technology cycle. The timing is the hard part. Too early and you endure years of underperformance. Too late and the repricing has happened. My estimate for the inflection point is 2027-2028, when infrastructure growth decelerates and application-layer value becomes visible in earnings.

The Method in the Madness

There is a paradox in trying to make sense of a world that changes as fast as ours does. The half-life of any specific prediction about AI is measured in months. Models that were frontier six months ago are commodities today. Companies that seemed invincible a year ago are scrambling to reinvent themselves. In this environment, the temptation is to throw up your hands and declare that prediction is futile, that strategy is a fool’s game, that the best you can do is react. I wrote almost exactly those words in the opening of my HBR article in 2001, describing the conventional wisdom I wanted to challenge. Twenty-five years later, the same defeatism is back, louder and more fashionable than ever.

I want to push back on it with a simple observation: the further back we can look, the more confidently we can peer into the future. The surface of technological change is turbulent and unpredictable. But beneath the surface, structural patterns repeat with remarkable fidelity. The hollowing of the middle. The migration of value to the ends. The commoditization of infrastructure. The rising premium on orchestration and judgment. These patterns have held across railroads, electrification, telecommunications, the internet, cloud computing, and now AI. Six transitions over 150 years, each with different technologies, different players, different timelines, but the same underlying architecture of value migration. That kind of durability across wildly different eras is not coincidence. It is structure.

This is the idea at the heart of my Substack, The Hidden Weave: that beneath the chaos of technological disruption, there are durable patterns that connect seemingly unrelated phenomena, patterns that become visible only when you look across decades rather than quarters. The abstraction thesis is one such pattern. The barbell pattern in labor markets is another. The value-at-the-ends architecture is a third. They are all expressions of the same underlying weave, hidden in plain sight for those willing to zoom out far enough to see it.

Isaac Newton’s metaphor about standing on the shoulders of giants has guided this framework across all three iterations. The nature of the giants keeps changing. In 2001, they were digital networks. In 2019, they were AI platforms. In 2026, they are foundation models and agent frameworks. But the act of standing on their shoulders, of knowing where to look and what to build, remains the highest-value human contribution. The value of abstraction has never been higher. And for those who can see the weave beneath the surface, the abstraction of value has never offered more opportunity.

In 2019, I included a thought experiment: if the Earth were destroyed today and humans had to rebuild everything, physical labor would be enormously valuable. I wrote it as a hypothetical. In 2026, we are in fact rebuilding the world’s infrastructure for an AI-powered economy, and we desperately need people who can do the building. The surgeon and the plumber, the CEO and the electrician, the AI strategist and the welder: they all do work that no model can reach. The old hierarchy of mind over matter is giving way to a new one: judgment and embodiment over routine. That is where value lives now. And if the pattern holds, as it has for 25 years and counting, that is where it will live for a long time to come.

This essay is the third iteration of a thesis first developed in “Where Value Lives in a Networked World” (Harvard Business Review, January 2001, with Deval Parikh) and continued in “The Importance of Value Abstraction in Artificial Intelligence for Business Leaders” (circa 2019). It connects to the author’s AI-Proof series, the Mind the Gap thesis, and the Archimedes Lever metaphor for human-AI collaboration. The analysis of specific companies and investment layers reflects structural patterns, not investment advice. All essays are available on The Hidden Weave.